The max bitrate I have ever achieved is 50 Mbps. If you use a Mac, the video data bitrate is automatically displayed each time you play a video. 50 is the max I have seen for the Typhoon. Oddly enough, Phantom drones will show 60. Honestly, it's nothing to lose sleep over.

Can someone please just clarify this for me?

The box of the Typhoon H states that the camera produces 60 Mbit/s.

In several threads I read that it's not capable of doing that. But a recent video I made showed around 168000 kbps! Isn't that around 168 Mbit/s, or am I getting something wrong?!

Thanks for your replies, guys.
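For what it's worth, the unit conversion in the question can be checked with a few lines of Python. This is only a sketch: the function names are made up for illustration, and it assumes the player reports decimal kilobits per second (1 Mbit = 1000 kbit, 1 byte = 8 bits).

```python
def kbps_to_mbps(kbps: float) -> float:
    """Convert kilobits per second to megabits per second (decimal units)."""
    return kbps / 1000.0


def mbps_to_mbyte_per_s(mbps: float) -> float:
    """Convert megabits per second to megabytes per second (8 bits per byte)."""
    return mbps / 8.0


reported_kbps = 168_000  # the figure quoted in the question
mbps = kbps_to_mbps(reported_kbps)
print(f"{reported_kbps} kbps = {mbps:.0f} Mbit/s = {mbps_to_mbyte_per_s(mbps):.0f} MB/s")
```

So the arithmetic in the question holds: 168000 kbps is 168 Mbit/s. Whether the player's readout reflects the real video bitrate is a separate matter.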

cgo3+ mbits?!

- Thread starter Heath75

DerStig and Tuna, at the end of the day it's all moot anyway. Any video you shoot is to be communicated to others. Almost no one has enough bandwidth to stream 4K. Netflix, for example, requires a steady internet connection speed of 25Mbps to do it. The reality is that true 4K streaming can’t take place at even 12-15Mbps unless there is a 40% efficiency in encoding going from H.264 to HEVC and the content is 24/30 fps, not 60 fps. Once a true 60Mbps 4K video from a high quality camera is downscaled and re-encoded so it can be streamed to the masses, who cares what it came from? Most of that great quality is lost unless all you plan on doing is viewing your own MP4s on your own PC.

To quote a portion of a Wired article -

"Even after the 4K streams have been optimized at the source, they’ll still require at least two to three times the bandwidth you’d need today to watch a 1080p HD feed. This is a problem that the industry can only solve by reorganizing its infrastructure, something that requires not only a significant capital investment, but also a lot of time. It will likely take years for cable providers to provision enough bandwidth on existing systems to deliver 4K video from the networks."

"Pulling in a 4K signal over the air should also be possible, but it will take years if it happens at all. First, major networks will need to decide to broadcast content in 4K and upgrade their equipment. Then they’ll need to get on the same page regarding next-generation broadcasting technologies."

Yuneec originally advertised 100Mbps. The CGO3+ actually tops out at 50Mbps in the heavily pre-processed "Gorgeous" mode, 40-42Mbps in "Natural" mode, and 29-32Mbps in their interpretation of "Raw" (actually a bad implementation of Log).

You may wish to update your numbers above. Ever since the September firmware update I can say for certain that Natural mode is now up to 49 to 50 Mbps. Have not tried the RAW recently but I would expect that has increased as well.

DerStig

Guest

I am more aware of distribution vs creation needs than anyone here. I do this for a living.

You need to start with more than you distribute with. Distribution Mbps is moot when originating. 35Mbps is the 1080p Blu-ray distribution number. 4K is four 1080p streams, meaning that to have the same visual quality as the "inferior" 1080p you need 140Mbps. Distribution is forcing new compression schemes like H.265, but for now none of the SoC processors used have the computing power or the energy efficiency to be used in a small action camera.
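The "four 1080p streams" figure is just the pixel-count ratio applied to the bitrate. A rough sketch of that rule of thumb follows; the helper name is hypothetical, and scaling bitrate linearly with pixel count is the assumption made in the post, not a property of any particular codec.

```python
def scale_bitrate_by_pixels(base_mbps, base_res, target_res):
    """Scale a bitrate linearly with pixel count (rule-of-thumb assumption)."""
    base_pixels = base_res[0] * base_res[1]
    target_pixels = target_res[0] * target_res[1]
    return base_mbps * target_pixels / base_pixels


full_hd = (1920, 1080)
uhd = (3840, 2160)     # consumer Ultra HD
dci_4k = (4096, 2160)  # DCI 4K

print(scale_bitrate_by_pixels(35, full_hd, uhd))               # 140.0
print(round(scale_bitrate_by_pixels(35, full_hd, dci_4k), 1))  # 149.3
```

UHD has exactly four times the pixels of 1080p, which is where 4 x 35 = 140Mbps comes from. DCI 4K is slightly wider, so the same rule gives a bit more.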

The key is to start with a high-bandwidth source; then you can compress for distribution. When we shoot for feature distribution or HD production we are using 12-bit color depth, not the 8-bit that Yuneec, GoPro, DJI and everyone else is. Compressing that down is always going to look better because you are starting with more information. Amazon has gotten smart in the pixel wars and has no problem with a 3.2K or 3.8K image shot in 12-bit HDR and uprezzed to 4K, where Netflix only cares about pixel count, and it shows in their original content. Case in point: the new Amazon "The Grand Tour" was originated on Arri Alexas, and for location aerials they use the DJI Inspire with the X5r camera because nothing else has the image quality that will work for them.

Yuneec originally advertised 100Mbps. The CGO3+ actually tops out at 50Mbps in the heavily pre-processed "Gorgeous" mode, 40-42Mbps in "Natural" mode, and 29-32Mbps in their interpretation of "Raw" (actually a bad implementation of Log).

The GoPro uses VBR (everyone does, but Tuna doesn't understand that) and records all modes at 60Mbps on the Hero 4 Black; the H4S tops out at 50Mbps. The Phantom 4 is 60Mbps, and so is the X5 (but it's M43 and has the advantages of the larger sensor). The Mavic is also looking to be 60Mbps with a variable focus lens. All my numbers are from actual video tests of the cameras. The Yuneec also lacks dynamic range compared to the GoPro and the DJI cameras (about 2 1/2 stops less DR), which is why the other cameras do better with clouds.
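To put the "2 1/2 stops" in perspective: each stop is a doubling of light, so a difference in stops converts to a brightness ratio as 2 raised to that number. A tiny sketch (the function name is only illustrative):

```python
def stops_to_ratio(stops: float) -> float:
    """Convert a difference in photographic stops to a linear brightness ratio."""
    return 2.0 ** stops


print(round(stops_to_ratio(2.5), 2))  # 5.66
```

In other words, 2.5 stops less dynamic range means the brightest-to-darkest span the sensor can hold is roughly 5.7 times smaller.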

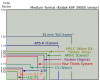

DerStig, I can't speak to the GoPro except to say it can't shoot in 4096x2160 resolution; its maximum resolution is the Ultra HD consumer format, which has a slightly lower resolution of 3840x2160. Both the Phantom 4 4K camera and the CGO3+ use the same sensor -

DJI's website -

See that little tiny one below in the lower left of the sensor size diagram? Both the Phantom 4 and the CGO3+ use that one. The X5 uses the Four Thirds System but the Zenmuse X5 costs as much as my H Pro so I would hope it's better.

Sensor diagram by MarcusGR

You also said the Phantom 4 is 60Mbps; DJI says 60Mbps is the max. Normal Mbps would be less than max, which makes sense. Either way, pro-level cameras are considered to be those which record video at bit rates from 35Mbps all the way up to 100Mbps. For me they are both good cameras, and by that definition they are considered pro-level cameras.

As far as your dynamic range comment, dynamic range of a camera means nothing by itself. What matters is how well you can make the subject fit within the range of the camera. That's what makes a photographer a photographer. Knowing how to light your subject or how to adjust your camera is what matters.

I know that you need to start with higher to have a better quality lower, that's a given. As far as H.265? I'll worry about that when I can afford to shoot 8K.

Rayray

Guest

DerStig

Guest

As far as your dynamic range comment, dynamic range of a camera means nothing by itself. What matters is how well you can make the subject fit within the range of the camera. That's what makes a photographer a photographer. Knowing how to light your subject or how to adjust your camera is what matters.

Sorry, but that's just wrong. DR plays into EVERY shot. DR is not what makes a photographer a photographer. The more DR, the better the image is going to look. Pro-level cameras START at 100Mbps and top out in the Gbps range. 35Mbps is not considered a pro-level camera.

DerStig

Guest

Do not despair, DS, I know you know that I know you know.

Only you don't know

LOL!!

Actually, I believe you're incorrect: professional cameras with better interframe compression actually require less bitrate, not more. Compression is where it's at now.

Cameras which test with greater dynamic range aren't any better than other cameras. They simply have lower contrast. Which is best for you depends on what you shoot.

DerStig

Guest

Actually, I believe you're incorrect: professional cameras with better interframe compression actually require less bitrate, not more. Compression is where it's at now.

LOL, compression is NOT where it's at.

Here are some ARRIRAW data rates...

Bitrates

- 16:9 ARRIRAW has a bit rate of 1.34 Gigabit per second at 24 fps.

- 4:3 ARRIRAW has a bit rate of 1.79 Gbit/s at 24 fps.

- 4:3 Cropped ARRIRAW has a bit rate of 1.60 Gbit/s at 24 fps.

- Open Gate ARRIRAW has a bit rate of 2.16 Gbit/s at 24 fps.

data rate

- 16:9 ARRIRAW is 7 Megabytes per frame. At 24 fps, this results in 168 Megabytes of data per second or 605 Gigabytes per hour.

- 4:3 ARRIRAW is 9.33 MB/frame; 224 MB/s and 806 GB/h at 24 fps.

- 4:3 Cropped ARRIRAW is 8.35 MB/frame and 722 GB/h at 24 fps.

- Open Gate ARRIRAW is 11.26 MB/frame and 972.5 GB/h.
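The two lists above are the same numbers in different units, and the relationship is easy to reproduce. A small sketch, assuming decimal units (1 GB = 1000 MB, 1 Gbit = 1000 Mbit) and 8 bits per byte; the function name is made up for illustration:

```python
def data_rates(mb_per_frame: float, fps: float = 24.0):
    """Return (MB/s, Gbit/s, GB/h) for a given frame size and frame rate."""
    mb_per_s = mb_per_frame * fps         # megabytes per second
    gbit_per_s = mb_per_s * 8 / 1000      # bytes -> bits, mega -> giga
    gb_per_h = mb_per_s * 3600 / 1000     # seconds -> hour, mega -> giga
    return mb_per_s, gbit_per_s, gb_per_h


# 16:9 ARRIRAW at 7 MB per frame
mb_s, gbit_s, gb_h = data_rates(7.0)
print(f"{mb_s:.0f} MB/s, {gbit_s:.2f} Gbit/s, {gb_h:.0f} GB/h")
```

For the 16:9 case this gives 168 MB/s, 1.34 Gbit/s and about 605 GB/h, matching the figures quoted. The cropped and Open Gate rows come out within a GB/h or so of the quoted values, presumably because the per-frame sizes are rounded.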

Wow, ok you won. Totally off the rails with this one. You found a camera that went totally out of the context of drone photography to a 6 figure camera used in high budget feature films that would require a full size helicopter to obtain the same shots I can get from my drone. So if this is what you do and you have access to such equipment, why are you bothering to muck about here with the likes of us? Makes no sense at all.

DerStig

Guest

Wow, ok you won. Totally off the rails with this one. You found a camera that went totally out of the context of drone photography to a 6 figure camera used in high budget feature films that would require a full size helicopter to obtain the same shots I can get from my drone. So if this is what you do and you have access to such equipment, why are you bothering to muck about here with the likes of us? Makes no sense at all.

No, that's what an Alexa Mini ($45K, not six figures) does, and it can fly on an M600. That's what pro gear does and what we use daily in TV and feature film work. But hey, keep telling me what we use and that "Compression is where it's at".

DerStig

Guest

I think what DerStig is trying to say is that compression is more important than DR and bitrate. Thanks for straightening everything out it gets very confusing at times.

No, I'm saying that lack of compression and higher dynamic range make for a better image. Bitrate is a function of compression and color depth.

DerStig

Guest

So a compressed bitrate in the color depth increases dynamic range?

No

DerStig

Guest

Ok, you lost me. So you aren't saying higher dynamic range and increased color depth are a product of less compression?

No
